Production multi-tenant SaaS for marketing agencies — AI-drafted contracts, approval workflows, and end-to-end client billing.

Three-runtime TypeScript monorepo: Next.js 16 web, NestJS API, BullMQ worker. Designed and shipped solo on Railway.

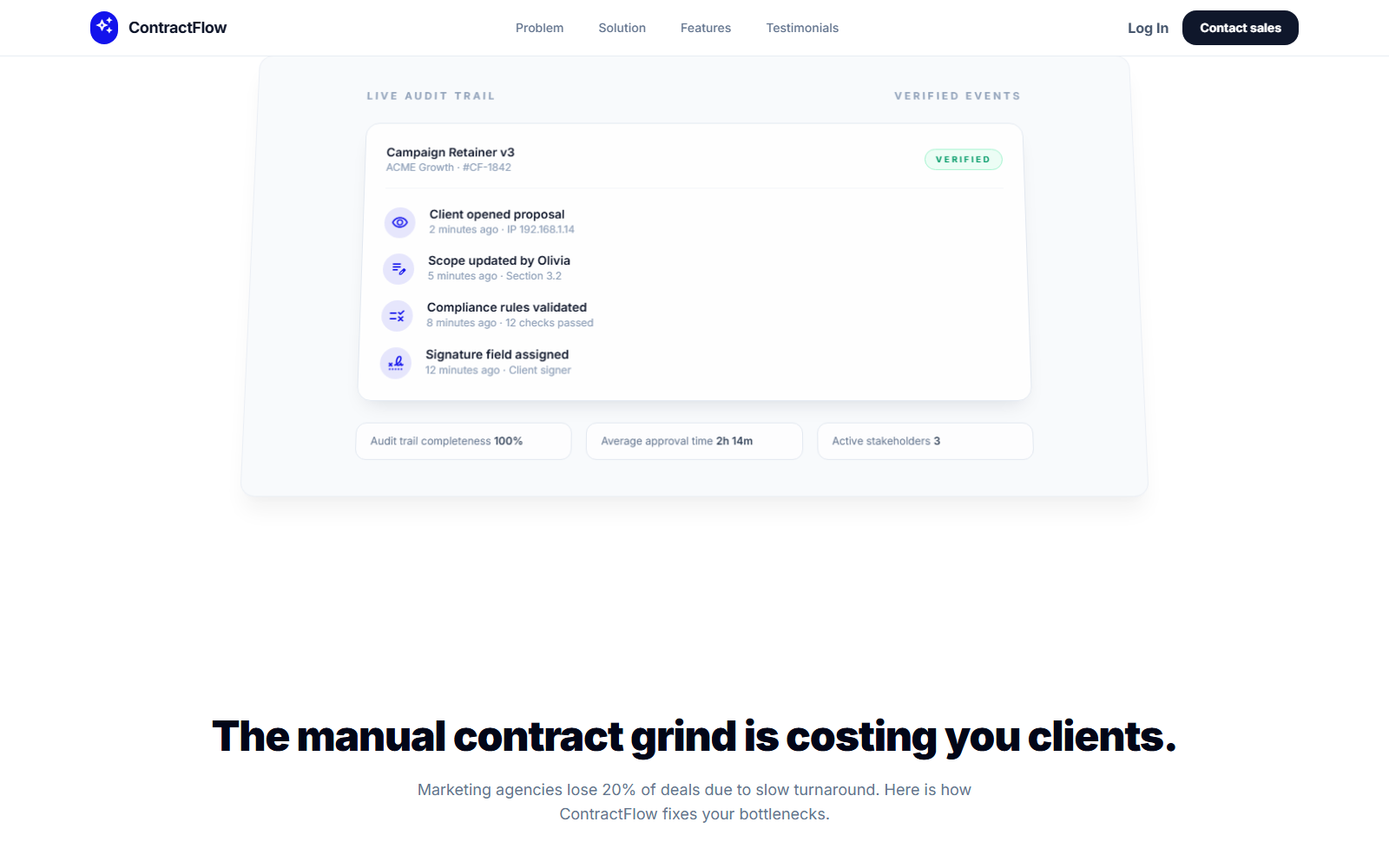

- Multi-tenant organizations with 4-tier RBAC (Owner / Admin / Member / Viewer); 5-state document workflow: Draft → Review → Sent → Signed → Paid.

- Streaming AI drafts over Server-Sent Events with provider failover (Google Gemini → OpenAI), generated by an async BullMQ worker.

- Custom HMAC-SHA-256 sessions, nonce-based CSP, Cloudflare Turnstile bot protection, Redis sliding-window rate limits, Paddle billing across three tiers.

- Multi-stage Docker, Caddy with automatic TLS, GitHub Actions CI/CD, Sentry monitoring.

ContractFlow runs as three independently scalable runtimes coordinated through Redis: a Next.js 16 web app handling SSR and middleware, a NestJS REST API with 12 domain modules (auth, audit, clients, documents, jobs, leads, notifications, org, projects, public-documents, templates, health), and a BullMQ background worker for AI generation and email. Shared Zod schemas in `packages/shared` keep types and validation consistent end-to-end. Postgres holds 18 Prisma models including Document with three independent state machines (status / approvalStatus / generationStatus). The web app rewrites `/api/*` to the NestJS service, served behind Caddy with automatic TLS on Railway.
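As a rough illustration of the `status` state machine, the five workflow states can be modelled as a transition table. This is a minimal sketch, not the production code: the names `ALLOWED`, `canTransition`, and `transition` are hypothetical, and the rule that a document in Review may return to Draft is an assumption.

```typescript
// Only the five states and their order come from the project description;
// everything else here is illustrative.
type DocumentStatus = "Draft" | "Review" | "Sent" | "Signed" | "Paid";

// Assumption: documents advance one step at a time, and a document in
// Review may be sent back to Draft for changes.
const ALLOWED: Record<DocumentStatus, readonly DocumentStatus[]> = {
  Draft: ["Review"],
  Review: ["Sent", "Draft"],
  Sent: ["Signed"],
  Signed: ["Paid"],
  Paid: [], // terminal state
};

function canTransition(from: DocumentStatus, to: DocumentStatus): boolean {
  return ALLOWED[from].includes(to);
}

// Returns the new status, or throws if the move is not in the table.
function transition(from: DocumentStatus, to: DocumentStatus): DocumentStatus {
  if (!canTransition(from, to)) {
    throw new Error(`Illegal status transition: ${from} -> ${to}`);
  }
  return to;
}
```

Keeping `approvalStatus` and `generationStatus` in separate tables of the same shape would let each machine evolve without touching the others.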

Custom HMAC-SHA-256 sessions over NextAuth

I wanted full control over session shape, rotation, and revocation. Verifying the signed cookie with `timingSafeEqual`, paired with a nonce-based CSP using `strict-dynamic`, removes a class of XSS-amplified hijack attacks that NextAuth doesn't address out of the box.
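A minimal sketch of the signing and verification step, using Node's built-in `crypto` module. The `Session` shape, the `payload.signature` cookie layout, and the function names are assumptions made for illustration; rotation and revocation are left out.

```typescript
import { createHmac, timingSafeEqual } from "node:crypto";

// Hypothetical session shape; the real fields are not documented here.
interface Session {
  userId: string;
  orgId: string;
  expiresAt: number; // epoch milliseconds
}

function sign(payload: string, secret: string): Buffer {
  return createHmac("sha256", secret).update(payload).digest();
}

// Assumed cookie layout: base64url(JSON) + "." + base64url(HMAC-SHA-256).
function encodeSession(session: Session, secret: string): string {
  const payload = Buffer.from(JSON.stringify(session)).toString("base64url");
  return `${payload}.${sign(payload, secret).toString("base64url")}`;
}

function decodeSession(cookie: string, secret: string, now: number = Date.now()): Session | null {
  const [payload, mac, ...rest] = cookie.split(".");
  if (!payload || !mac || rest.length > 0) return null;

  const expected = sign(payload, secret);
  const actual = Buffer.from(mac, "base64url");
  // timingSafeEqual throws on unequal lengths, so compare lengths first.
  if (actual.length !== expected.length || !timingSafeEqual(actual, expected)) {
    return null;
  }

  // The payload is only parsed after its signature has been verified.
  const session = JSON.parse(Buffer.from(payload, "base64url").toString("utf8")) as Session;
  return session.expiresAt > now ? session : null;
}
```

The constant-time comparison is applied to the two MACs rather than to the raw payload, so a mismatch takes the same time wherever the first differing byte falls.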

BullMQ worker for AI drafting, not inline

AI generation can take 5–30s with provider failover (Gemini → OpenAI). Holding an HTTP request open that long is a recipe for timeouts. A persisted DraftJob state machine, 3-attempt exponential backoff, and worker heartbeats mean the API responds in <100ms while the work happens elsewhere.
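The retry and heartbeat logic can be sketched without any queue library. This is an illustration only: the `DraftJob` states, the 1-second base delay, and the 30-second heartbeat timeout are assumptions, and only the 3-attempt limit comes from the description above.

```typescript
// Hypothetical states; the text names the DraftJob state machine but not its states.
type DraftJobState = "queued" | "running" | "succeeded" | "failed";

interface DraftJob {
  id: string;
  state: DraftJobState;
  attemptsMade: number;
  lastHeartbeatAt: number; // epoch milliseconds
}

const MAX_ATTEMPTS = 3; // from the description
const BASE_DELAY_MS = 1_000; // assumption

// Exponential backoff: base, 2x base, 4x base for attempts 1, 2, 3.
function backoffDelay(attemptsMade: number, baseMs: number = BASE_DELAY_MS): number {
  return baseMs * 2 ** (attemptsMade - 1);
}

// Decide what happens after an attempt fails: requeue with a delay, or give up.
function onAttemptFailed(job: DraftJob): { job: DraftJob; retryInMs: number | null } {
  const attemptsMade = job.attemptsMade + 1;
  if (attemptsMade >= MAX_ATTEMPTS) {
    return { job: { ...job, attemptsMade, state: "failed" }, retryInMs: null };
  }
  return {
    job: { ...job, attemptsMade, state: "queued" },
    retryInMs: backoffDelay(attemptsMade),
  };
}

// A running job whose worker has stopped sending heartbeats is treated as stalled.
function isStalled(job: DraftJob, now: number, timeoutMs: number = 30_000): boolean {
  return job.state === "running" && now - job.lastHeartbeatAt > timeoutMs;
}
```

With BullMQ itself, the retry half of this is normally expressed as job options such as `{ attempts: 3, backoff: { type: "exponential", delay: 1000 } }` rather than hand-written code; the sketch just makes the resulting behavior explicit.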

Provider failover over Server-Sent Events

Streaming AI drafts via SSE feels alive. Wrapping that in a provider-agnostic facade (Gemini default, OpenAI fallback, deterministic placeholder for total provider outage) means the user always sees progress, never a hung spinner.
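One way to sketch the facade is with async generators. The `DraftProvider` type, `streamWithFailover`, and `toSseEvent` are hypothetical names, and the rule of only failing over before the first chunk has been emitted is an assumption rather than documented behavior.

```typescript
// A provider yields text chunks as they arrive from the model.
type DraftProvider = (prompt: string) => AsyncIterable<string>;

// Try each provider in order; if all fail, yield a deterministic placeholder.
async function* streamWithFailover(
  prompt: string,
  providers: DraftProvider[],
  placeholder: string,
): AsyncGenerator<string> {
  for (const provider of providers) {
    let emitted = false;
    try {
      for await (const chunk of provider(prompt)) {
        emitted = true;
        yield chunk;
      }
      return; // this provider finished cleanly
    } catch (err) {
      // Assumption: once text has reached the client, switching providers
      // would splice two different drafts together, so surface the error.
      if (emitted) throw err;
    }
  }
  yield placeholder;
}

// Format a chunk as a Server-Sent Events message: each line of the chunk
// gets a "data: " prefix, and a blank line terminates the event.
function toSseEvent(chunk: string): string {
  return chunk.split("\n").map((line) => `data: ${line}`).join("\n") + "\n\n";
}
```

In use, the provider list would be something like `[geminiProvider, openAiProvider]`, with each chunk passed through `toSseEvent` before being written to the response.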

Multi-tenant orgs with 4-tier RBAC

Owner / Admin / Member / Viewer is the right amount of granularity for marketing agencies. Less and you can't separate the founder from a junior writer; more and the permission UI becomes its own headache.
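A four-tier model like this can be checked with a simple rank comparison. The sketch below assumes the roles are strictly hierarchical (each tier includes everything below it); the action names and the role each one requires are hypothetical examples, not the real permission table.

```typescript
// Higher rank includes every permission of the ranks below it (assumption).
const ROLE_RANK = { Viewer: 0, Member: 1, Admin: 2, Owner: 3 } as const;
type Role = keyof typeof ROLE_RANK;

function hasRole(actual: Role, required: Role): boolean {
  return ROLE_RANK[actual] >= ROLE_RANK[required];
}

// Hypothetical actions and the minimum role assumed for each.
type Action = "document:read" | "document:edit" | "member:invite" | "billing:manage";

const REQUIRED_ROLE: Record<Action, Role> = {
  "document:read": "Viewer",
  "document:edit": "Member",
  "member:invite": "Admin",
  "billing:manage": "Owner",
};

function can(role: Role, action: Action): boolean {
  return hasRole(role, REQUIRED_ROLE[action]);
}
```

With four tiers, the whole policy fits in one lookup table, which is part of why the permission UI stays small.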